Build Detections That Actually Work on AI.

AI-DE is Starseer's detection engineering platform — built so your team can design, test, deploy, and tune detections for the AI attack surface with the same rigor you apply to traditional threats.

.png?width=1200&height=750&name=Starseer%20Platform%20Laptop%20Images%20(1).png)

— Five Stages. Full Control.

Treat your detections

like production code.

Starseer maps to every phase of the detection engineering workflow, from the first rule sketch to retirement.

Design

Write versioned YAML detection artifacts

Express behavioral patterns, threshold logic, and contextual conditions using Starseer's AI-native schema.

Test

Validate against adversarial fixtures

Measure true positive rate, false positive rate, and latency before any detection ships.

Deploy

Ship through AIOps with quality gates

Automated checks block under-tested rules from production. Canary rollout supported.

Tune

Manage false positives without degrading coverage

Every suppression is versioned, scoped, and auditable. No silent coverage loss.

Retire

Flag stale rules before they become blind spots

Starseer surfaces coverage gaps as models change and attack patterns shift.

— Detections Are Code

Treat them that way.

Every detection in Starseer is a versioned, testable artifact — not a dashboard setting. Ship detections through the same review and CI/CD pipeline you use for everything else.

Version control native

Detections live in your repo. PR reviews, blame history, and rollback — exactly as you'd manage any other production code.

CI/CD pipeline integration

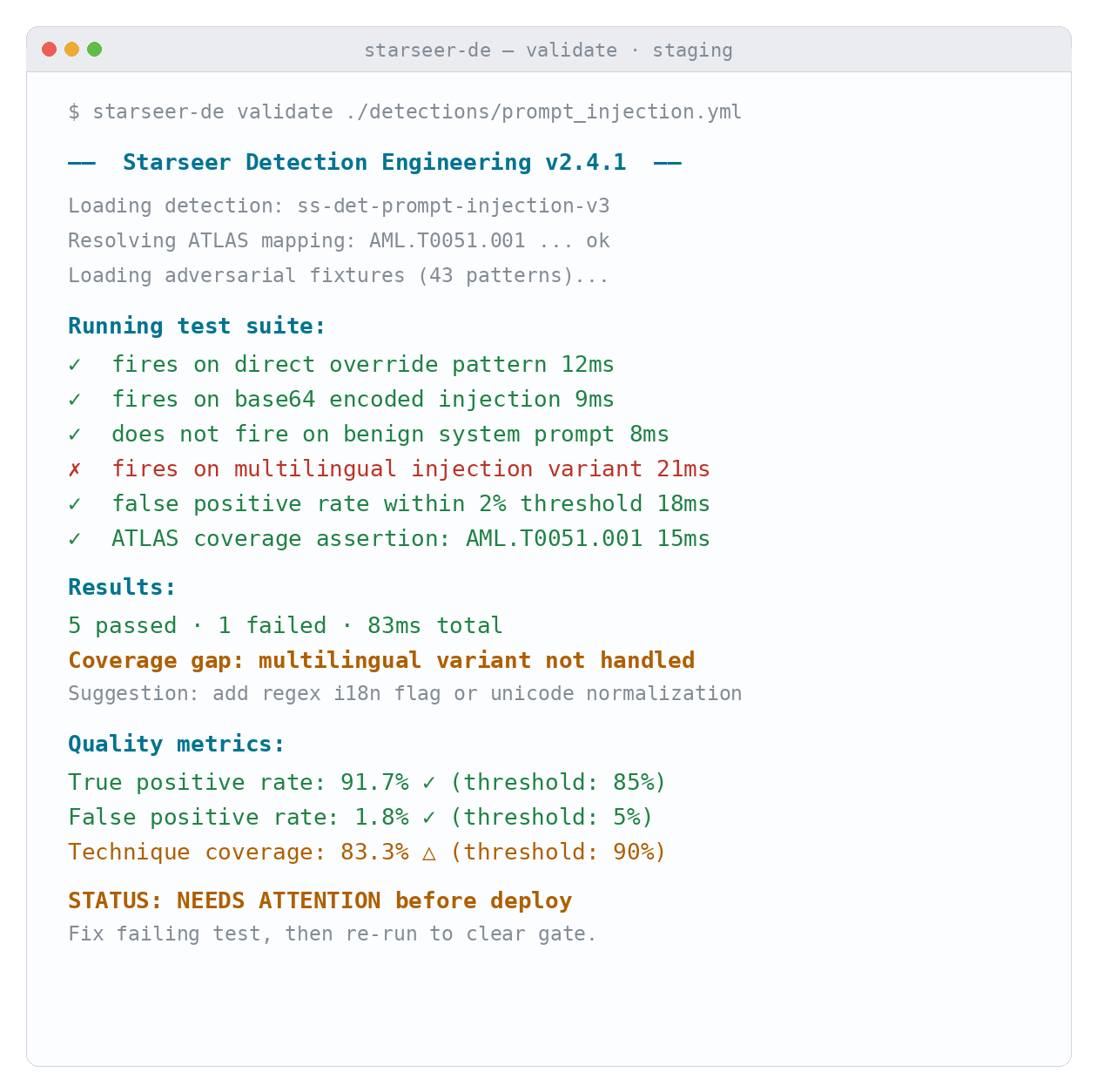

Starseer runs test suites on every commit. Under-tested or failing detections are blocked from deployment automatically.

Adversarial simulation fixtures

A curated library of AI attack patterns — prompt injection variants, LLMjacking behaviors, data poisoning triggers — usable as test fixtures against any detection you write.

— Coverage Map Analysis

See what you detect.

See what you don't.

Starseer maps every detection to a MITRE ATLAS tactic. The coverage gap view shows exactly where your detection stack is strong, partial, or blind — so you can prioritize what to build next.

— The Results

Elevate and protect

your business today.

300+

AI threat techniques covered

94%

Detection validation pass rate

3X

Faster detection iteration cycle

— Who This Is Built For

For the engineers

who build the sensors.

AI-DE is designed around the people who own detection quality — from the engineers writing rules to the architects designing the stack.

Detection Engineers

You write detection logic. You need a platform that treats your rules as code, not as dashboard config. AI-DE is built for the way you already work.

SOC Builders

Standing up an AI-aware SOC. Get detection coverage from day one without building the library from scratch.

Threat Hunters

Proactively looking for gaps. Starseer's coverage map shows you exactly where the AI attack surface is unguarded — before someone else finds it.

Security Architects

Designing the overall AI security stack. AI-DE is the detection engineering layer that feeds AI-EDR, closing the loop between production signal and detection improvement.

— Frequently Asked Questions

Common questions about AI-DE.

How is this different from writing Sigma or KQL rules for a SIEM?

Sigma and KQL describe patterns in structured event logs — file writes, network connections, authentication events. AI behavior doesn't produce structured events. It produces probabilistic outputs, semantic reasoning chains, tool invocation sequences, and agentic decision trees. None of those surface in a log aggregator in any form a traditional rule can key on. If you're trying to detect prompt injection or agent hijacking by writing KQL against your SIEM, you're not detecting the behavior — you're detecting the log noise around it, if anything shows up at all.

Starseer takes a different approach entirely: we use YARA rules for AI detection engineering. YARA is already the language security teams trust for expressive, precise pattern matching — and Starseer gives you a telemetry surface that YARA can actually operate against for AI behavior. The platform normalizes AI signals — prompt content, inference outputs, tool invocation sequences, decision path traces, output distribution shifts — into structured, inspectable records that your YARA rules run against directly.

The result is that you're writing in a rule language your team already knows, with the tooling and review practices you've already built around it, against an attack surface that no SIEM or traditional EDR can reach. You're not learning a proprietary detection schema or rebuilding your institutional knowledge from scratch. You're extending an existing practice into a new layer — the one that your current stack is blind to.

Can we integrate the detection pipeline with our existing CI/CD setup?

Yes. Starseer exposes a CLI and API that integrate with GitHub Actions, GitLab CI, Jenkins, and most standard pipeline tooling. Detections are tested on every push, and deployments are gated on pass/fail status — the same way a failing unit test blocks a code merge.

You configure the quality thresholds per detection or per rule set: minimum true positive rate, maximum false positive rate, required ATLAS technique coverage assertions. Starseer enforces them automatically. Rules that don't meet threshold don't ship. There's no manual sign-off bottleneck and no way for an under-tested detection to reach production without someone explicitly overriding the gate and leaving an audit trail.

What's in the adversarial simulation library, and how do I use it to test my detections?

The library is a curated set of AI attack fixtures mapped to the MITRE ATLAS technique set. Current coverage includes prompt injection variants — direct, indirect, multi-turn, base64-encoded, and multilingual — agent hijacking patterns, LLMjacking resource abuse behaviors, data poisoning trigger signatures, model tampering indicators, and covert agentic workflow patterns.

Each fixture is a structured test case: an input condition, an expected firing behavior, and a set of contextual modifiers. You reference fixtures directly in your detection's test block, or run the full library against a detection to measure coverage breadth. The library is updated continuously as new attack patterns are documented — your existing detection tests automatically gain new coverage assertions without you rewriting any rules. A detection that passed last month can surface a new gap this month if a new fixture exposes it, which is exactly the behavior you want.

How do we manage false positive suppression without silently degrading coverage?

This is the most common way detection programs deteriorate — suppressions accumulate, nobody audits them, and the rule that looked like it had 90% coverage six months ago is now effectively firing on 40% of the surface it was designed for. Starseer is built to prevent this specifically.

Every suppression is a versioned artifact attached to a specific detection rule. It requires a written rationale, a defined scope, and an expiration date. Suppressions cannot silently expand — scope changes require a new commit. On every suppression commit, Starseer generates a coverage diff report showing the net coverage impact: what you're choosing not to fire on, and how much of the intended detection surface that represents. Expired suppressions are flagged automatically and surface in your next pipeline run as warnings. You always have an auditable record of every exception, when it was made, why, and whether it's still intentional.

How do we know our detections will actually fire under real conditions, not just in test?

Two mechanisms. First, historical event replay: Starseer lets you run any detection against a window of real production telemetry captured by AI-EDR, so you can measure how a rule would have performed against actual observed behavior — not simulated inputs. This surfaces false positive rates that unit tests miss because production inputs are messier and more varied than anything you'd construct by hand.

Second, the adversarial simulation library is continuously updated to reflect real-world attack patterns as they're documented in the wild. A detection that passes today's fixtures is being tested against techniques that have actually been observed, not just theorized. Between replay against real data and simulation against real attack patterns, you get a materially more honest picture of production performance than any pure unit testing approach can give you. Neither replaces the other — the strongest validation posture uses both.

Can AI-DE and AI-EDR be used independently?

Yes, and there are good reasons to use either without the other. AI-DE alone gives you a detection engineering workbench — you can build, test, and maintain a detection library without a live AI-EDR deployment. Teams that already have runtime monitoring infrastructure sometimes use AI-DE specifically to improve the quality of detections they push to other platforms.

AI-EDR alone gives you runtime monitoring and response using Starseer's built-in detection library — fully functional without custom rule authoring.

That said, the two products compound significantly when used together. AI-EDR generates the production telemetry that feeds historical replay in AI-DE. Detection quality metrics from AI-DE inform tuning decisions in AI-EDR. False positive signals from production flow back into suppression management in the engineering workflow. The loop between production signal and detection improvement is where most of the long-term value accumulates — closing that loop manually, across two separate platforms, is exactly the friction AI-DE and AI-EDR are designed to eliminate together.

How does detection engineering support AI governance and compliance?

It does, but that's a side effect of doing the engineering work well — not a goal you should be optimizing for directly. If your detections are high-quality, versioned, tested against documented attack techniques, and shipped through a governed pipeline, the compliance artifacts generate themselves: ATLAS technique mappings, test result histories, coverage reports, suppression audit trails, and deployment logs.

Starseer surfaces these as exportable reports aligned to NIST AI RMF, ISO 42001, and the EU AI Act. The framing we'd suggest to auditors and regulators is not "we have a compliance tool" — it's "our detection engineering practice produces evidence of control effectiveness as a natural output." That's a materially stronger position than a dashboard that generates PDFs on demand, and it holds up to scrutiny in a way that after-the-fact reporting typically doesn't.

— Get Started

Your AI attack surface

has no detections yet.

Start building the detection library your AI environment needs — before a threat tests whether it exists.